Deep Declarative Networks

ECCV 2020 Tutorial, 28 August, Glasgow, UK (online)


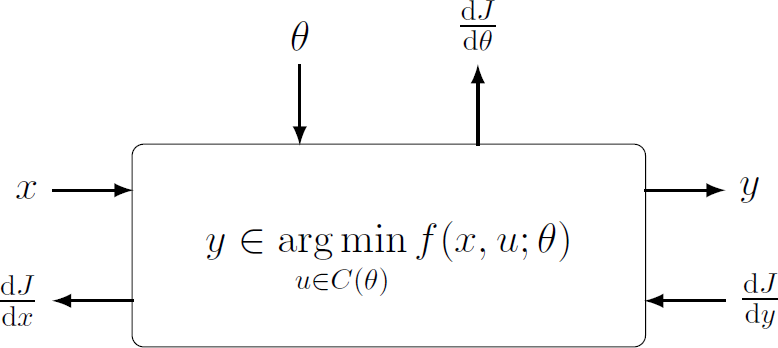

Conventional deep learning architectures are compositions of simple, explicitly defined feedforward processing functions (inner products, convolutions, elementwise non-linear transforms and pooling operations). Over the past several years, researchers have been exploring deep learning models with embedded differentiable optimization problems (Agrawal et al., 2019; Amos and Kolter, 2017; Gould et al., 2016). These models have recently been applied to problems in computer vision (e.g., Fernando and Gould, 2016; Cherian et al., 2017; Santa Cruz et al., 2018; Lee et al., 2019; Wang et al., 2019) and in other areas of machine learning.

Because these networks specify the behavior of individual processing layers rather than their algorithmic implementation, they are called deep declarative networks (DDNs), a term borrowed from the programming languages community (Gould et al., 2019). Importantly, the gradient of the solution to the optimization problem with respect to its inputs and parameters can be computed by implicitly differentiating the optimality conditions, without knowing which algorithm was used to solve the problem in the first place. This allows for efficient backpropagation and end-to-end learning.
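A minimal NumPy sketch of this idea follows. It is not code from the tutorial or the DDN codebase; the node and function names are hypothetical. It assumes a robust pooling node y(x) = argmin_u f(x, u) with f(x, u) = Σ_i φ(u − x_i), where φ is the pseudo-Huber penalty with scale alpha. The forward pass uses plain bisection, but the backward pass only uses the optimality condition f_Y(x, y) = 0: by the implicit function theorem, dy/dx = −f_YY⁻¹ f_YX. For this objective that reduces to normalized weights φ''(y − x_i) / Σ_j φ''(y − x_j).

```python
import numpy as np

# Pseudo-Huber penalty phi(z) = alpha^2 * (sqrt(1 + (z/alpha)^2) - 1).
# Only its first and second derivatives are needed.
def dphi(z, alpha):
    return z / np.sqrt(1.0 + (z / alpha) ** 2)

def d2phi(z, alpha):
    return (1.0 + (z / alpha) ** 2) ** -1.5

def robust_mean(x, alpha=1.0, iters=200):
    """Forward pass (hypothetical node): y = argmin_u sum_i phi(u - x_i).

    The objective is strictly convex in u, so its derivative is increasing
    and has a single root in [min(x), max(x)]; bisection finds it. Any other
    solver would do, because the backward pass never inspects the solver."""
    lo, hi = float(np.min(x)), float(np.max(x))
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if np.sum(dphi(mid - x, alpha)) > 0.0:
            hi = mid  # derivative positive: the minimizer lies to the left
        else:
            lo = mid
    return 0.5 * (lo + hi)

def robust_mean_grad(x, y, alpha=1.0):
    """Backward pass via the implicit function theorem.

    At the optimum f_Y(x, y) = 0, so dy/dx = -f_YY^{-1} f_YX, with
    f_YY = sum_j phi''(y - x_j) and f_YX_i = -phi''(y - x_i)."""
    w = d2phi(y - x, alpha)
    return w / np.sum(w)

if __name__ == "__main__":
    x = np.array([0.1, -0.4, 0.3, 0.2, 5.0])  # last entry is an outlier
    y = robust_mean(x)
    g = robust_mean_grad(x, y)
    # Compare against central finite differences that re-run the solver.
    eps = 1e-6
    fd = np.array([(robust_mean(x + eps * e) - robust_mean(x - eps * e)) / (2 * eps)
                   for e in np.eye(x.size)])
    print("y =", y)
    print("implicit grad:", g)
    print("finite diffs: ", fd)
```

Swapping bisection for Newton's method or gradient descent would leave `robust_mean_grad` unchanged, which is the point made in the paragraph above.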

Topics

This tutorial will introduce deep declarative networks and their variants. We will discuss the theory behind differentiable optimization, the technical issues that must be overcome in developing such models, and applications of these models to computer vision problems. The tutorial will also provide hands-on experience in designing and implementing a custom declarative node. Topics include:

- Declarative end-to-end learnable processing nodes.

- Differentiable convex optimization problems.

- Declarative nodes for computer vision applications.

- Implementation techniques and gotchas.
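As a small illustration of a differentiable convex optimization problem, here is a NumPy sketch (not taken from CVXPY, cvxpylayers or the DDN codebase; all function names are hypothetical). It implements the Euclidean projection y = argmin_y ½‖y − x‖² subject to A y = b. The forward pass solves the KKT system K [y; ν] = [x; b] with K = [[I, Aᵀ], [A, 0]]. The backward pass differentiates that system, in the spirit of OptNet's differentiation of a quadratic program's KKT conditions. Because K is symmetric, a single extra solve gives the vector-Jacobian products with respect to both x and b. The sketch assumes A has full row rank so that K is invertible.

```python
import numpy as np

def kkt_matrix(A):
    """KKT matrix of min_y 0.5*||y - x||^2 subject to A y = b."""
    m, n = A.shape
    return np.block([[np.eye(n), A.T], [A, np.zeros((m, m))]])

def proj_forward(x, A, b):
    """Hypothetical layer forward pass.

    Solves stationarity (y - x + A^T nu = 0) and feasibility (A y = b)."""
    n = x.size
    sol = np.linalg.solve(kkt_matrix(A), np.concatenate([x, b]))
    return sol[:n]

def proj_backward(v, A):
    """Given v = dL/dy, return (dL/dx, dL/db).

    Differentiating the KKT system gives K [dy; dnu] = [dx; db], so dy/dx and
    dy/db are blocks of K^{-1}. K is symmetric, so solving K s = [v; 0]
    yields both vector-Jacobian products at once."""
    m, n = A.shape
    sol = np.linalg.solve(kkt_matrix(A), np.concatenate([v, np.zeros(m)]))
    return sol[:n], sol[n:]

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    A = rng.standard_normal((2, 5))  # assumed full row rank
    b = rng.standard_normal(2)
    x = rng.standard_normal(5)
    y = proj_forward(x, A, b)
    grad_x, grad_b = proj_backward(np.ones(5), A)  # upstream gradient of L = sum(y)
    print("constraint residual:", A @ y - b)
    print("dL/dx:", grad_x)
    print("dL/db:", grad_b)
```

For this simple problem the gradient with respect to x is just the projection of v onto the null space of A. Solving the KKT system generalizes to problems without a closed form, which is the situation the tutorial targets.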

Program

The tutorial is available on the ECCV2020 online platform and on the ANU CVML YouTube channel. It is organized as a playlist of six videos, divided into two modules, the Deep Declarative Networks module (DDN) and the Differentiable Convex Optimization module (CO):

- DDN - Basic concepts and theory (Stephen Gould). ECCV2020 platform, YouTube

- DDN - Applications (Dylan Campbell). ECCV2020 platform, YouTube

- DDN - Hands-on coding using the DDN codebase (Dylan Campbell). ECCV2020 platform, YouTube

- CO - Background and basic concepts (Steven Diamond). ECCV2020 platform, YouTube

- CO - Implementation considerations and applications (Brandon Amos). ECCV2020 platform, YouTube

- CO - Hands-on coding with CVXPY (Akshay Agrawal). ECCV2020 platform, YouTube

Join us at one of the live Q&A sessions on Friday, August 28th:

Speakers

- Stephen Gould, ANU

- Dylan Campbell, ANU

- Steven Diamond, Stanford

- Brandon Amos, Facebook AI

- Akshay Agrawal, Stanford

Organizers

Contact: eccv2020@deepdeclarativenetworks.com

Resources

- CVXPY: Convex optimization, for everyone

- CVXPYLAYERS: Differentiable convex optimization layers

- DDN: Deep Declarative Networks

- OPTNET: Differentiable Optimization as a Layer in Neural Networks

- DDN hands-on coding session notebook: Notebook presented in Dylan’s talk.

- CVXPYLAYERS hands-on coding session notebook: Notebook presented in Akshay’s talk.